Over Christmas, I hacked together my first hardware/software project. It’s been a long time since I’ve picked up a soldering iron, let alone built something worthy of sharing. It turned out to be a fun little project.

Cause, effect, agency

I got the idea while shopping for Christmas toys for my son. I wanted to find something that would teach him simple cause-and-effect relationships, where he could cause something (e.g. clicking red & blue blocks together) that produced an observable effect (e.g. the blocks changing color to purple). I hoped that I could prime some interest in science. I also really wanted to instill a sense that he can make things happen for himself.

For a two year old, I basically came up empty.

But for a slightly older kid, we’re living in a golden age of hackable creativity. We have 3D printers that are slowly becoming affordable. The Internet makes it easy to find (and share!) instructions for building everything from customized furniture to undersea robots. Open source and community-based tools are getting cheaper and easier to use every year. Several businesses have grown up offering easy instructions and tutorials. (Come on, these look cool, don’t they?)

So, I decided I’d use the four-day Christmas long weekend to hack together a hardware prototype (with help from my wife’s nephew).

The project

For the project, I set out to build a simple thermometer & barometer that I could check from my iPhone. I also wanted it to have some visible indicator that would be fun to look at so my son could check it. As a beginner, I also wanted something I thought I could pull off.

My project centered around an Arduino microcontroller board. An Arduino is an inexpensive open source “electronics prototyping platform” that can be programmed using nearly any computer and a USB cable. Because it’s cheap and freely documented, people have hooked up dozens (if not hundreds) of sensors & other electronics to it.

I had an old kit lying around that I rediscovered after I returned to Fanzter and started working with our resident hardware hacker extraordinaire, Josh. I recommend beginning with a starter kit if you’re just getting into electronics projects. You can get several decent options from Adafruit, MakerSHED, or Sparkfun. I have an older Sparkfun Inventor’s Kit, but any from these three vendors will do.

You should go through a few of the tutorials before trying the rest of this to get familiar with the basics of Arduino programming.

Here’s the full parts list:

Tools:

In addition, if you want to get an app running on your iPhone, iPad, or iPod Touch, you’ll need to have a developer account with Apple.

The build-out is really simple. The BLE shield snaps onto the Arduino board, basically extending the Arduino’s pins and sockets up through itself. Just make sure you line up all the pins and sockets. More details at RedBearLab if you want them.

For the LED matrix and the temperature sensor, there’s a little soldering involved. For both the soldering and the basic wiring setup, I followed the instructions in Adafruit’s tutorials:

Make sure you position the LED matrix correctly before soldering it. I got multiple warnings about that from people.

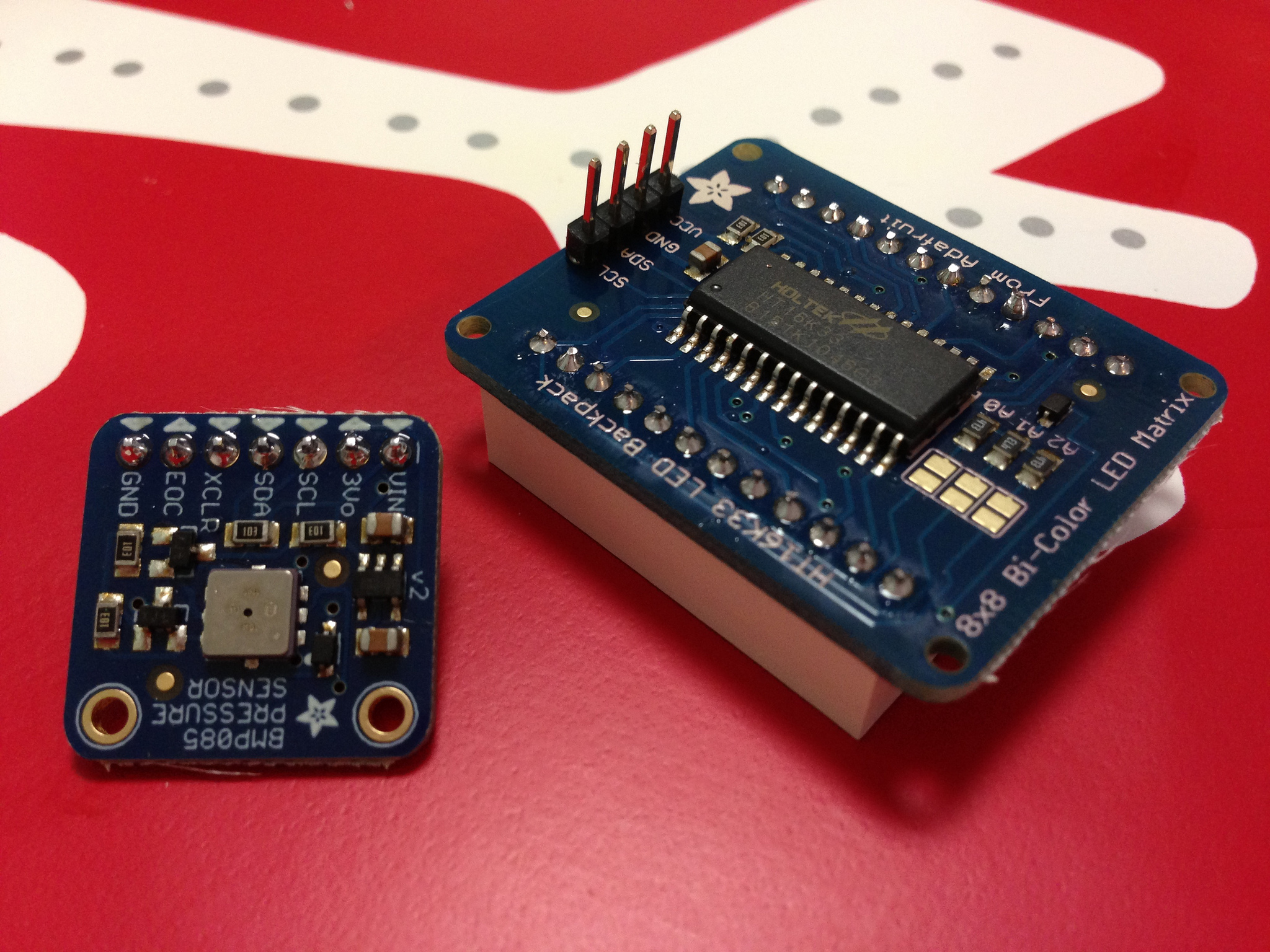

Here are the results of my soldering job:

I’ll admit, I’m proud of how well that came out considering it was my first soldering project in 20 years.

The only difference in my final wiring from the two tutorials is that I hooked the matrix CLK and DAT pins to the same rows containing the CLK and DAT lines from the Arduino to the temperature sensor. In the picture at left, those are the green and orange wires (Click through for a larger view). This works because they both speak a protocol called I2C and have different addresses. [1]
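To make the shared-wire idea concrete, here is a minimal sketch of address-based dispatch on a two-wire bus. It is a toy model in Python, not the Arduino code for this project. The class names are made up for illustration, and the two addresses are assumptions: 0x70 is the usual default for Adafruit's LED backpack, and 0x77 is what Bosch's BMP085-style boards typically use. Check the datasheets for your own parts.

```python
class Device:
    """Stand-in for a chip on the bus; it just records what it receives."""

    def __init__(self, name):
        self.name = name
        self.received = []


class I2CBus:
    """Toy model of a shared bus: every device is wired to the same two
    lines, but only the one whose address matches a transaction responds."""

    def __init__(self):
        self.devices = {}  # 7-bit address -> Device

    def attach(self, address, device):
        # 0x00-0x07 and 0x78-0x7F are reserved in 7-bit addressing.
        if not 0x08 <= address <= 0x77:
            raise ValueError(f"address {address:#04x} is outside the usable range")
        if address in self.devices:
            raise ValueError(f"address {address:#04x} is already taken")
        self.devices[address] = device

    def write(self, address, data):
        device = self.devices.get(address)
        if device is None:
            return False  # nobody acknowledged: no device at that address
        device.received.append(bytes(data))
        return True


# Assumed addresses (see the note above).
MATRIX_ADDR = 0x70
SENSOR_ADDR = 0x77

bus = I2CBus()
matrix = Device("LED matrix backpack")
sensor = Device("temperature/pressure sensor")
bus.attach(MATRIX_ADDR, matrix)
bus.attach(SENSOR_ADDR, sensor)

# Placeholder payloads; the point is who receives them, not what they mean.
bus.write(MATRIX_ADDR, [0x00, 0xFF])  # only the matrix sees this
bus.write(SENSOR_ADDR, [0xF4, 0x2E])  # only the sensor sees this
```

Attaching a second device at an address that is already in use raises an error in this model, which mirrors why the two parts need different addresses before they can share the same pair of wires.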

For power and ground, I used the breadboard instead of hooking the sensor or backpack directly to the Arduino board. This is standard, and what the kit tutorials encourage. Just thought I should mention it, since it’s not directly mentioned in the two Adafruit tutorials above.

The next step is programming the Arduino. Rather than walk you through all the details, here’s the source code. Feel free to fork the project and mess around. I’d appreciate any bug fixes if you have them. To use the source code, you’ll need to install the Arduino software and the Ino tool. I used Ino so that the GitHub repository would have everything you need. To run the project, launch Terminal, type ino build to compile it, and then ino upload to get it onto your Arduino. If you want to see the serial output, ino serial -b 57600 will show it on your terminal screen.

I also have the iOS code available if you’d like to play with that. You’ll need to be comfortable with iOS development to use this. I may submit a version to the store if there’s enough interest. Let me know.

That’s it. The finished wiring looks like this:

When lit up, it looks something like this (only 2 readings are displayed – normally there are 8):
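One way to picture how eight readings could fit on an 8x8 matrix is a bar chart with one column per reading. The sketch below is in Python so it can be run on a desktop, and it is not the code from the Arduino sketch. The function names, the -10 to 40 degree range, and the choice of bit 0 as the leftmost column are all assumptions for illustration.

```python
def bar_height(reading, low=-10.0, high=40.0, rows=8):
    """Scale a reading to a bar height between 0 and `rows` LEDs."""
    clamped = max(low, min(high, reading))
    return round((clamped - low) / (high - low) * rows)


def to_rows(readings, rows=8, cols=8):
    """Return one bitmask per matrix row, top row first.

    Bit 0 is the leftmost column, which holds the oldest reading."""
    heights = [bar_height(r, rows=rows) for r in readings[-cols:]]
    masks = []
    for row in range(rows):            # row 0 is the top of the display
        needed = rows - row            # a bar must be this tall to reach the row
        mask = 0
        for col, height in enumerate(heights):
            if height >= needed:
                mask |= 1 << col
        masks.append(mask)
    return masks


def render(masks, cols=8):
    """Draw the bitmasks as text so the pattern can be checked by eye."""
    return "\n".join(
        "".join("#" if mask & (1 << col) else "." for col in range(cols))
        for mask in masks
    )


# Two readings, like the photo: two bars on the left, the rest dark.
print(render(to_rows([15.0, 27.5])))
```

Keeping the display as eight one-byte row masks is a natural fit for a small microcontroller, since the whole frame costs eight bytes.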

The iOS app is really simple:

Drag up to trigger a connect or disconnect. Eventually, I’ll add a pull down to trigger a temp refresh. Otherwise, it polls every minute.

Known issues

The code isn’t perfect and, as I get free time, I’m still cleaning up a few things. Here are some known issues:

- Bluetooth reliability: For some reason, the iPhone doesn’t seem to disconnect and/or reconnect properly to the device. Pressing the reset button on the BLE shield usually fixes it, which makes me think there’s something wrong in my code.

- Memory usage: The main challenge in programming an Arduino is that the device only has about 2K of RAM for the sketch. Yes, that’s two kilobytes. It’s a challenging environment when I’m used to phones that have 256-512MB of RAM (or more). My code is definitely not optimized, and the program did run out of memory regularly. I think it’s stable now, but it’s not as good as I think I can get it.
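To get a feel for how tight 2K is, here is a rough sketch of one common way to keep a reading history small and predictable: a fixed-size ring buffer that stores tenths of a degree as 16-bit integers instead of floats. It is written in Python for easy experimenting and is not the code from this project. The names and the history length of 8 (matching the 8 readings on the display) are assumptions for illustration. The byte counts come from standard 4-byte floats and 2-byte integers, which are also the sizes of float and int16_t on the AVR chips in most Arduino boards.

```python
import struct

SRAM_BYTES = 2048   # "about 2K" of RAM
HISTORY_LEN = 8     # assumed: one slot per reading shown on the display


def history_cost(fmt, count=HISTORY_LEN):
    """Bytes needed to hold `count` readings packed with struct format `fmt`."""
    return struct.calcsize("<" + fmt) * count


class RingBuffer:
    """Fixed-capacity history: memory is claimed once and never grows."""

    def __init__(self, capacity=HISTORY_LEN):
        self.slots = [0] * capacity
        self.count = 0   # how many slots hold real readings
        self.next = 0    # index the next reading will be written to

    def push(self, celsius):
        self.slots[self.next] = round(celsius * 10)  # store tenths of a degree
        self.next = (self.next + 1) % len(self.slots)
        self.count = min(self.count + 1, len(self.slots))

    def values(self):
        """Readings in degrees Celsius, oldest first."""
        start = (self.next - self.count) % len(self.slots)
        return [self.slots[(start + i) % len(self.slots)] / 10
                for i in range(self.count)]


print("floats:", history_cost("f"), "bytes; int16:", history_cost("h"), "bytes")

history = RingBuffer()
for i in range(10):          # push more readings than the buffer can hold
    history.push(i + 0.5)
print(history.values())      # only the newest HISTORY_LEN readings remain
```

The saving on eight readings is small, but the same habit (fixed sizes, small integer types, nothing allocated at runtime) is what keeps a sketch from creeping past the limit as features are added.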

Next steps

I’m going to try to hook it up to a Raspberry Pi and put it in a weatherproof enclosure so I can leave it outside. My other goal is to change the LED matrix to an LED strip like this so I can make it look like an actual thermometer.

I’ll update this with photos if I get that far.

Hope that helps someone out. It was a fun project, and I’m looking forward to working on this more.

1 I2C is a simple two-wire interface to hardware components. It allows the Arduino to control multiple devices over just two pins. The Wikipedia page has the gory details, but just know that each device has an address which has to be unique, and then you just wire them up in parallel. The LED matrix backpack from Adafruit provides an I2C interface to the LED matrix, and the Bosch sensor comes on a board that also speaks I2C, so all the work is basically done for you.

That’s more detail than you probably need, but I thought it was neat.

Update: Two corrections above, both minor but notable. I accidentally described an Arduino as a microprocessor instead of microcontroller, but then Josh pointed out that it’s really a whole platform because the microcontroller is the specific chip at the heart of the Arduino. It’s a significant detail when you get more advanced because different versions of the Arduino might have different microcontrollers at the heart of the platform.

The other is how I described the I2C wiring in the footnote. The sensor and the LED matrix are wired in parallel, not series. I had a feeling that was the wrong word, but forgot to look that up. Minor detail, but again significant for deeper understanding.

Sorry about both of those. They’re fixed above.